As you know, configuring robots.txt is important for any website that is working on its SEO. In particular, when you configure the sitemap to allow search engines to index your store, you also need to give web crawlers instructions in the robots.txt file so that they skip the pages you do not want indexed. The robots.txt file, which resides in the root of your Magento installation, contains directives that search engines such as Google, Yahoo, and Bing recognize and follow. In this post, I will show you how to configure the robots.txt file so that it works well with your site.

Table of contents:

- What is Robots.txt in Magento 2?

- Steps to Configure Magento 2 robots.txt file

- Magento 2 Robots.txt Examples

- Magento 2 Default Robots.txt

- More Robots.txt examples

- Common Web crawlers (Bots)

What is Robots.txt in Magento 2?

The robots.txt file tells web crawlers which parts of your website they may crawl and index and which parts they should skip. Defining these rules for crawlers helps you optimize your website's ranking. For example, you may want crawlers to skip particular parts of your store, which you can do through configuration. You can either use the default settings or set custom instructions for each search engine.
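To see how a crawler applies per-search-engine instructions, here is a minimal sketch using Python's standard `urllib.robotparser` module. The store URL `https://example.com` and the sample rules are hypothetical. Real search engines use their own parsers, which may differ in details such as wildcard support. The key behavior shown here is group selection: a crawler that finds a group naming it follows only that group and ignores the `User-agent: *` group.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file: Googlebot gets its own group, every other crawler uses "*".
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /catalogsearch/

User-agent: *
Disallow: /checkout/
Disallow: /catalogsearch/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Pass the crawler's product token (e.g. "Googlebot"), not the full User-Agent header.
for agent in ("Googlebot", "Bingbot"):
    for path in ("/catalogsearch/result/", "/checkout/cart/"):
        allowed = parser.can_fetch(agent, "https://example.com" + path)
        print(f"{agent:10} {path:24} {'allowed' if allowed else 'blocked'}")
```

Googlebot is allowed into `/checkout/cart/` here because its own group does not mention that path. Any rule you want Googlebot to obey must therefore be repeated inside its group.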

Steps to Configure Magento 2 robots.txt file

Please follow this step-by-step guide to configure your robots.txt file in Magento 2:

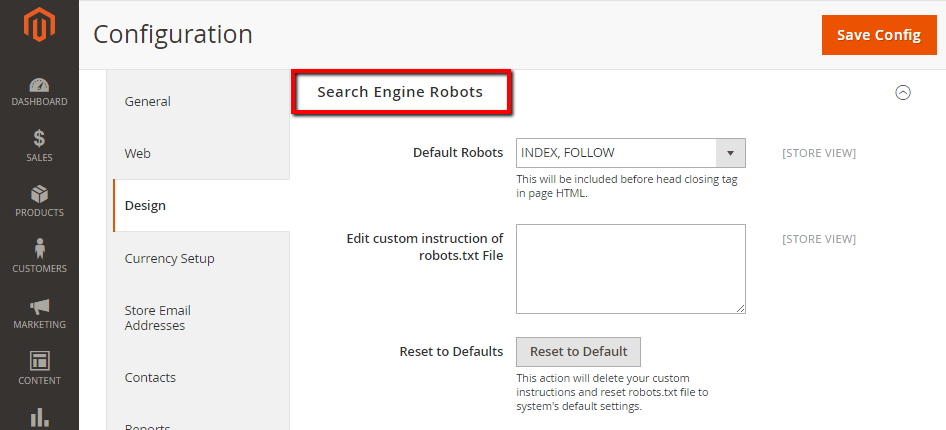

- On the Admin panel, click Stores. In the Settings section, select Configuration.
- Select Design under General in the panel on the left.
- Open the Search Engine Robots section, and continue with the following:
  - In Default Robots, select one of the following:
    - INDEX, FOLLOW
    - NOINDEX, FOLLOW
    - INDEX, NOFOLLOW
    - NOINDEX, NOFOLLOW
  - In the Edit Custom instruction of robots.txt File field, enter custom instructions if needed.
  - In the Reset to Defaults field, click the Reset to Default button if you need to restore the default instructions.
- When complete, click Save Config.
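After you click Save Config, it is worth confirming what crawlers actually receive. Crawlers always request the file from `/robots.txt` at the root of your store's domain. Below is a minimal sketch that downloads that file and tests a few URLs against it using Python's `urllib.robotparser`. The function name `check_live_robots` and any store URL you pass in are hypothetical. Because `read()` fetches through `urllib.request`, it also accepts a local `file://` URL, which is useful for testing rules before pasting them into the admin.

```python
from urllib.robotparser import RobotFileParser


def check_live_robots(robots_url: str, user_agent: str, urls: list[str]) -> dict[str, bool]:
    """Fetch robots.txt from robots_url and report whether user_agent may crawl each URL."""
    parser = RobotFileParser(robots_url)
    # read() downloads and parses the file. On HTTP 401/403 the parser treats every
    # URL as disallowed; on other 4xx responses it treats every URL as allowed.
    parser.read()
    return {url: parser.can_fetch(user_agent, url) for url in urls}
```

For example, `check_live_robots("https://example.com/robots.txt", "Googlebot", ["https://example.com/checkout/cart/"])` returns a dictionary mapping each URL to `True` (allowed) or `False` (blocked). Replace `example.com` with your own domain.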

Magento 2 Robots.txt Examples

You can also keep web crawlers away from your pages by setting custom instructions such as the following:

- Allows Full Access

User-agent: *
Disallow:

- Disallows Access to All Folders

User-agent: *
Disallow: /
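The difference between these two rule sets is a single character, so it can help to check them mechanically. This is a small sketch using Python's standard `urllib.robotparser`. The helper name `can_crawl` and the store URL are hypothetical. It shows that an empty `Disallow:` blocks nothing, while `Disallow: /` blocks every path.

```python
from urllib.robotparser import RobotFileParser

# The two rule sets from the examples above.
FULL_ACCESS = "User-agent: *\nDisallow:\n"
NO_ACCESS = "User-agent: *\nDisallow: /\n"


def can_crawl(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Parse robots_txt and report whether user_agent may crawl url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


url = "https://example.com/women/tops.html"  # hypothetical store URL
print(can_crawl(FULL_ACCESS, url))  # True: an empty Disallow value blocks nothing
print(can_crawl(NO_ACCESS, url))    # False: "/" is a prefix of every path
```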

Magento 2 Default Robots.txt

User-agent: *
Disallow: /*.php$
Disallow: /pkginfo/
Disallow: /report/
Disallow: /var/
Disallow: /catalog/
Disallow: /sendfriend/
Disallow: /*SID=
Disallow: /*?
# Disable checkout & customer account
Disallow: /checkout/
Disallow: /onestepcheckout/
Disallow: /customer/
Disallow: /customer/account/
Disallow: /customer/account/login/
# Disable Search pages
Disallow: /catalogsearch/
Disallow: /catalog/product_compare/
Disallow: /catalog/category/view/
Disallow: /catalog/product/view/
# Disable common folders
Disallow: /app/
Disallow: /bin/
Disallow: /dev/
Disallow: /lib/
Disallow: /phpserver/
Disallow: /pub/
# Disable Tag & Review (Avoid duplicate content)
Disallow: /tag/
Disallow: /review/
# Common files
Disallow: /composer.json
Disallow: /composer.lock
Disallow: /CONTRIBUTING.md
Disallow: /CONTRIBUTOR_LICENSE_AGREEMENT.html
Disallow: /COPYING.txt
Disallow: /Gruntfile.js
Disallow: /LICENSE.txt
Disallow: /LICENSE_AFL.txt
Disallow: /nginx.conf.sample
Disallow: /package.json
Disallow: /php.ini.sample
Disallow: /RELEASE_NOTES.txt
# Disable sorting (Avoid duplicate content)
Disallow: /*?*product_list_mode=
Disallow: /*?*product_list_order=
Disallow: /*?*product_list_limit=
Disallow: /*?*product_list_dir=
# Disable version control folders and others
Disallow: /*.git
Disallow: /*.CVS
Disallow: /*.zip$
Disallow: /*.svn$
Disallow: /*.idea$
Disallow: /*.sql$
Disallow: /*.tgz$
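Several rules in the default file above use two pattern characters: `*` matches any sequence of characters, and a trailing `$` marks the end of the URL. Both are part of the Robots Exclusion Protocol as standardized in RFC 9309. Python's `urllib.robotparser` only does plain prefix matching, so it cannot evaluate these patterns. The sketch below is a small hand-written matcher for a quick sanity check. The function names are hypothetical. It deliberately ignores user-agent groups, `Allow` lines, and the longest-match precedence that Google applies, so treat it as an approximation rather than a faithful crawler.

```python
import re

# A few rules in the same style as the default file (not the complete file).
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /*.php$
Disallow: /*?
Disallow: /*.zip$
"""


def rule_to_regex(rule: str) -> re.Pattern:
    """Translate one robots.txt path rule into a compiled regex.

    '*' matches any sequence of characters and a trailing '$' anchors the
    end of the URL; every other character is matched literally and
    case-sensitively.
    """
    anchored = rule.endswith("$")
    if anchored:
        rule = rule[:-1]
    body = ".*".join(re.escape(part) for part in rule.split("*"))
    return re.compile(body + (r"\Z" if anchored else ""))


def disallow_patterns(robots_txt: str) -> list[re.Pattern]:
    """Collect every non-empty Disallow rule, ignoring comments.

    Simplification: user-agent groups and Allow lines are not considered.
    """
    patterns = []
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        field, _, value = line.partition(":")
        if field.strip().lower() == "disallow" and value.strip():
            patterns.append(rule_to_regex(value.strip()))
    return patterns


def is_blocked(path_and_query: str, patterns: list[re.Pattern]) -> bool:
    """True if any rule matches from the start of the URL path (plus query)."""
    return any(p.match(path_and_query) for p in patterns)


rules = disallow_patterns(SAMPLE_ROBOTS_TXT)
for path in ("/checkout/cart/", "/index.php", "/index.php/admin",
             "/women/tops.html", "/women/tops.html?product_list_order=price"):
    print(f"{path:45} {'blocked' if is_blocked(path, rules) else 'allowed'}")
```

In this sample, `/index.php` is blocked but `/index.php/admin` is not, because `$` requires the URL to end in `.php`. Matching is also case-sensitive, so `/*.zip$` would not block `/backup.ZIP`.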

More Robots.txt examples

Block Googlebot from a folder

User-agent: Googlebot
Disallow: /subfolder/

Block Googlebot from a page

User-agent: Googlebot
Disallow: /subfolder/page-url.html
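Since rules match by path prefix, a folder rule covers every page under that folder, while a page rule covers only that URL. This is a short sketch with Python's standard `urllib.robotparser` that checks both Googlebot examples above against a few hypothetical paths on `example.com`. The helper name `blocked_paths` is illustrative.

```python
from urllib.robotparser import RobotFileParser

# The two example rule sets above, each as its own robots.txt file.
FOLDER_RULE = "User-agent: Googlebot\nDisallow: /subfolder/\n"
PAGE_RULE = "User-agent: Googlebot\nDisallow: /subfolder/page-url.html\n"

# Hypothetical paths on a store at example.com.
PATHS = ["/subfolder/page-url.html", "/subfolder/other-page.html", "/index.html"]


def blocked_paths(robots_txt: str, user_agent: str, paths: list[str]) -> list[str]:
    """Return the paths that user_agent is not allowed to crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(user_agent, "https://example.com" + p)]


print(blocked_paths(FOLDER_RULE, "Googlebot", PATHS))
# ['/subfolder/page-url.html', '/subfolder/other-page.html']
print(blocked_paths(PAGE_RULE, "Googlebot", PATHS))
# ['/subfolder/page-url.html']
print(blocked_paths(FOLDER_RULE, "Bingbot", PATHS))
# [] : the file has no group for Bingbot, so nothing is blocked for it
```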

Common Web crawlers (Bots)

Here are the user-agent names of some common crawlers on the internet:

User-agent: Googlebot

User-agent: Googlebot-Image/1.0

User-agent: Googlebot-Video/1.0

User-agent: Bingbot

User-agent: Slurp # Yahoo

User-agent: DuckDuckBot

User-agent: Baiduspider

User-agent: YandexBot

User-agent: facebot # Facebook

User-agent: ia_archiver # Alexa

The bottom line

Configuring robots.txt is the first step toward optimizing your search engine rankings, as it tells search engines which pages to crawl and which to skip. After that, you can take a look at this guide on how to configure the Magento 2 sitemap. If you want a hassle-free solution that works right out of the box with easy installation, check out our SEO extension. If you need more help with this, contact us and we will handle the rest.

Source: How to Configure Magento 2 Robots.txt file? – Mageplaza